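This article was validated on a 13th Gen Intel Core i5-13490F CPU. With the quantized model, a laptop with 16 GB of RAM is enough to try the generation process (32 GB gives the best experience).

SDXL-Turbo is a fast generative text-to-image model that can synthesize photorealistic images from a text prompt in a single network evaluation. It uses a novel training method called Adversarial Diffusion Distillation (ADD) (see the technical report), which allows large-scale foundation image diffusion models to be sampled in 1 to 4 steps while maintaining high image quality. Combining the inference performance of the OpenVINO toolkit (version 2023.2, the latest at the time of writing) with the neural network compression provided by NNCF, we can generate high-quality SDXL-Turbo images in under two seconds.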

01

Environment setup

Before starting, install all the dependencies:

%pip install --extra-index-url https://download.pytorch.org/whl/cpu torch transformers diffusers nncf optimum-intel gradio openvino==2023.2.0 onnx "git+https://github.com/huggingface/optimum-intel.git"
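Once the install finishes, it can help to confirm which versions were actually picked up, since the command above pins openvino==2023.2.0. A minimal sketch using only the standard library; the helper name `installed_versions` is hypothetical and not part of any of these packages:

```python
from importlib import metadata


def installed_versions(packages):
    """Return {package: version string, or None if the package is not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    wanted = ["openvino", "nncf", "optimum-intel", "diffusers", "gradio"]
    for name, version in installed_versions(wanted).items():
        print(f"{name}: {version or 'not installed'}")
```

A `None` entry simply means the package could not be found in the current environment.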

02

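Downloading and converting the model

First, convert the original model downloaded from Hugging Face to OpenVINO IR so that the NNCF toolchain can quantize it later. After conversion you will have the corresponding text_encoder, unet, and vae models.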

from pathlib import Path
import os

model_dir = Path("./sdxl_vino_model")
sdxl_model_id = "stabilityai/sdxl-turbo"
skip_convert_model = model_dir.exists()

if not skip_convert_model:
    # Download into the current folder, using a mirror endpoint to speed up the download
    os.environ['HF_ENDPOINT'] = 'https://hf-mirror.com'
    os.system(f'optimum-cli export openvino --model {sdxl_model_id} --task stable-diffusion-xl {model_dir} --fp16')

os.environ['HF_ENDPOINT'] = 'https://hf-mirror.com'
tae_id = "madebyollin/taesdxl"
save_path = './taesdxl'
os.system(f'huggingface-cli download --resume-download {tae_id} --local-dir {save_path}')

import torch
import openvino as ov
from diffusers import AutoencoderTiny
import gc


class VAEEncoder(torch.nn.Module):
    def __init__(self, vae):
        super().__init__()
        self.vae = vae

    def forward(self, sample):
        return self.vae.encode(sample)


class VAEDecoder(torch.nn.Module):
    def __init__(self, vae):
        super().__init__()
        self.vae = vae

    def forward(self, latent_sample):
        return self.vae.decode(latent_sample)


def convert_tiny_vae(save_path, output_path):
    tiny_vae = AutoencoderTiny.from_pretrained(save_path)
    tiny_vae.eval()

    vae_encoder = VAEEncoder(tiny_vae)
    ov_model = ov.convert_model(vae_encoder, example_input=torch.zeros((1,3,512,512)))
    ov.save_model(ov_model, output_path / "vae_encoder/openvino_model.xml")
    tiny_vae.save_config(output_path / "vae_encoder")

    vae_decoder = VAEDecoder(tiny_vae)
    ov_model = ov.convert_model(vae_decoder, example_input=torch.zeros((1,4,64,64)))
    ov.save_model(ov_model, output_path / "vae_decoder/openvino_model.xml")
    tiny_vae.save_config(output_path / "vae_decoder")


convert_tiny_vae(save_path, model_dir)
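Before loading the pipeline, it can be worth checking that the export produced every component. The sketch below assumes the layout implied by this article: one subfolder per component (the names are the ones the pipeline prints when compiling), each holding `openvino_model.xml` plus a matching `.bin` file. The helper `missing_components` is hypothetical.

```python
from pathlib import Path

# Component folders, named as in the pipeline's "Compiling the ..." log messages.
EXPECTED_COMPONENTS = ("text_encoder", "text_encoder_2", "unet", "vae_encoder", "vae_decoder")


def missing_components(model_dir, components=EXPECTED_COMPONENTS):
    """List components whose IR .xml or .bin file is absent under model_dir."""
    missing = []
    for name in components:
        xml_path = Path(model_dir) / name / "openvino_model.xml"
        if not (xml_path.exists() and xml_path.with_suffix(".bin").exists()):
            missing.append(name)
    return missing


if __name__ == "__main__":
    # An empty list means every expected component was found.
    print(missing_components("./sdxl_vino_model"))
```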

03

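Text-to-image generation

We can now generate images from text. The optimized OpenVINO pipeline loads the converted model files and runs inference; a single text prompt is all that is needed to produce the image.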

from optimum.intel.openvino import OVStableDiffusionXLPipeline

device = 'AUTO'  # AUTO is used here; 'CPU' works as well
model_dir = "./sdxl_vino_model"
text2image_pipe = OVStableDiffusionXLPipeline.from_pretrained(model_dir, device=device)

import numpy as np

prompt = "cute cat"

image = text2image_pipe(prompt, num_inference_steps=1, height=512, width=512, guidance_scale=0.0, generator=np.random.RandomState(987)).images[0]

image.save("cat.png")

image

# Release the resources held by the pipeline
import gc
del text2image_pipe
gc.collect()
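The 512x512 request above lines up with the example inputs used when converting the tiny VAE: an image tensor of shape (1, 3, 512, 512) and a latent of shape (1, 4, 64, 64), i.e. a spatial reduction by a factor of 8. A small sketch of that relationship, assuming the factor 8 and the 4 latent channels read off those shapes also hold at other resolutions; `latent_shape` is a hypothetical helper:

```python
def latent_shape(height, width, batch=1, channels=4, factor=8):
    """Latent tensor shape for an image size, assuming a fixed spatial reduction factor."""
    if height % factor or width % factor:
        raise ValueError(f"height and width must be multiples of {factor}")
    return (batch, channels, height // factor, width // factor)


print(latent_shape(512, 512))  # (1, 4, 64, 64), the decoder example_input shape
```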

04

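Image-to-image generation

The diffusion model can also generate an image from an image. Here we feed the text-to-image result produced above through a second generation pass.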

from optimum.intel import OVStableDiffusionXLImg2ImgPipeline

model_dir = "./sdxl_vino_model"
device = 'AUTO'  # 'CPU'
image2image_pipe = OVStableDiffusionXLImg2ImgPipeline.from_pretrained(model_dir, device=device)

Compiling the vae_decoder to AUTO ...
Compiling the unet to AUTO ...
Compiling the vae_encoder to AUTO ...
Compiling the text_encoder_2 to AUTO ...
Compiling the text_encoder to AUTO ...

photo_prompt = "a cute cat with bow tie"

photo_image = image2image_pipe(photo_prompt, image=image, num_inference_steps=2, generator=np.random.RandomState(511), guidance_scale=0.0, strength=0.5).images[0]

photo_image.save("cat_tie.png")

photo_image
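In diffusers-style image-to-image pipelines, `strength` decides how much of the noise schedule is actually run, so the number of denoising steps executed is roughly `int(num_inference_steps * strength)`. With `num_inference_steps=2` and `strength=0.5` as above, that is a single step. The sketch below mirrors that arithmetic under the assumption that the OpenVINO pipeline follows the same rule; `effective_steps` is a hypothetical helper, not a library function:

```python
def effective_steps(num_inference_steps, strength):
    """Denoising steps actually executed by an img2img call, assuming diffusers-style scheduling."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be in [0, 1]")
    init_timestep = min(int(num_inference_steps * strength), num_inference_steps)
    t_start = max(num_inference_steps - init_timestep, 0)
    return num_inference_steps - t_start


print(effective_steps(2, 0.5))  # 1
print(effective_steps(1, 0.5))  # 0: no denoising would run, so keep steps * strength >= 1
```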

05

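Quantization

NNCF (Neural Network Compression Framework) is a neural network compression framework. It compresses and quantizes models in OpenVINO IR format to improve their inference performance when deployed on Intel devices.

[NNCF]:

https://github.com/openvinotoolkit/nncf/

[NNCF] implements post-training quantization by adding quantization layers to the model graph and then using a subset of the training dataset to calibrate the parameters of these additional layers. The resulting quantized weights are INT8 rather than FP32/FP16, which speeds up model inference.

Given the structure of the SDXL-Turbo model, the UNet accounts for a significant share of the pipeline's total execution time. We now show how to use [NNCF] to optimize the UNet, reducing computation cost and speeding up the pipeline. The remaining components are not quantized: doing so would not improve inference performance significantly, but could cause a substantial drop in accuracy.

The quantization process consists of the following steps:

- Create a calibration dataset for quantization.

- Run nncf.quantize() to obtain the quantized model.

- Save the INT8 model with the openvino.save_model() function.

Note: quantization needs substantial hardware resources (more than 64 GB of RAM), so a pre-quantized model is provided later in this article for direct download.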
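To make the idea of calibration concrete, here is a toy sketch of symmetric INT8 quantization: a scale is estimated from calibration samples, then values are rounded to 8-bit integers and mapped back. This illustrates the general principle only; it is not NNCF's actual algorithm, and all names are hypothetical.

```python
def calibrate_scale(calibration_values, num_bits=8):
    """Pick a symmetric scale so the largest calibration magnitude maps to the top integer level."""
    qmax = 2 ** (num_bits - 1) - 1  # 127 for INT8
    max_abs = max(abs(v) for v in calibration_values)
    return max_abs / qmax if max_abs else 1.0


def quantize(values, scale, num_bits=8):
    """Round values to signed integers, clamping to the representable range."""
    qmax = 2 ** (num_bits - 1) - 1
    return [max(-qmax, min(qmax, round(v / scale))) for v in values]


def dequantize(q_values, scale):
    """Map integers back to approximate floating-point values."""
    return [q * scale for q in q_values]


calibration = [-1.27, 0.0, 0.4, 1.0]
scale = calibrate_scale(calibration)
q = quantize([0.4, -1.27, 2.0], scale)  # 2.0 lies outside the calibrated range and is clamped
restored = dequantize(q, scale)
print(q, restored)
```

The clamped value shows why the calibration subset should be representative: inputs outside the range seen during calibration lose precision.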

from pathlib import Path

import openvino as ov

from optimum.intel.openvino import OVStableDiffusionXLPipeline

import os

core = ov.Core()

model_dir = Path("./sdxl_vino_model")

UNET_INT8_OV_PATH = model_dir / "optimized_unet" / "openvino_model.xml"

import datasets

import numpy as np

from tqdm import tqdm

from transformers import set_seed

from typing import Any, Dict, List

set_seed(1)

class CompiledModelDecorator(ov.CompiledModel):
    def __init__(self, compiled_model: ov.CompiledModel, data_cache: List[Any] = None):
        super().__init__(compiled_model)
        self.data_cache = data_cache if data_cache else []

    def __call__(self, *args, **kwargs):
        self.data_cache.append(*args)
        return super().__call__(*args, **kwargs)


def collect_calibration_data(pipe, subset_size: int) -> List[Dict]:
    original_unet = pipe.unet.request
    pipe.unet.request = CompiledModelDecorator(original_unet)
    dataset = datasets.load_dataset("conceptual_captions", split="train").shuffle(seed=42)

    # Run inference for data collection
    pbar = tqdm(total=subset_size)
    diff = 0
    for batch in dataset:
        prompt = batch["caption"]
        if len(prompt) > pipe.tokenizer.model_max_length:
            continue
        _ = pipe(
            prompt,
            num_inference_steps=1,
            height=512,
            width=512,
            guidance_scale=0.0,
            generator=np.random.RandomState(987)
        )
        collected_subset_size = len(pipe.unet.request.data_cache)
        if collected_subset_size >= subset_size:
            pbar.update(subset_size - pbar.n)
            break
        pbar.update(collected_subset_size - diff)
        diff = collected_subset_size

    calibration_dataset = pipe.unet.request.data_cache
    pipe.unet.request = original_unet
    return calibration_dataset

if not UNET_INT8_OV_PATH.exists():
    text2image_pipe = OVStableDiffusionXLPipeline.from_pretrained(model_dir)
    unet_calibration_data = collect_calibration_data(text2image_pipe, subset_size=200)
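The decorator above is an instance of a simple pattern: wrap a callable, record every input it receives, and forward the call unchanged. A library-free sketch of the same pattern, with a stand-in function in place of the compiled UNet (the names `RecordingCallable` and `fake_unet` are hypothetical):

```python
class RecordingCallable:
    """Wrap a callable and keep each positional input it is called with."""

    def __init__(self, wrapped, data_cache=None):
        self.wrapped = wrapped
        self.data_cache = data_cache if data_cache is not None else []

    def __call__(self, inputs, **kwargs):
        self.data_cache.append(inputs)         # record the input for later calibration
        return self.wrapped(inputs, **kwargs)  # behave exactly like the wrapped callable


def fake_unet(inputs):
    """Stand-in for the compiled model: just sums the input values."""
    return sum(inputs.values())


recorder = RecordingCallable(fake_unet)
for step in range(3):
    recorder({"sample": step, "timestep": 1})

print(len(recorder.data_cache))  # 3 recorded inputs
```

Because the wrapper returns whatever the wrapped callable returns, the surrounding pipeline keeps working while the inputs pile up in `data_cache`.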

import nncf

from nncf.scopes import IgnoredScope

UNET_OV_PATH = model_dir / "unet" / "openvino_model.xml"

if not UNET_INT8_OV_PATH.exists():
    unet = core.read_model(UNET_OV_PATH)
    quantized_unet = nncf.quantize(
        model=unet,
        model_type=nncf.ModelType.TRANSFORMER,
        calibration_dataset=nncf.Dataset(unet_calibration_data),
        ignored_scope=IgnoredScope(
            names=[
                "__module.model.conv_in/aten::_convolution/Convolution",
                "__module.model.up_blocks.2.resnets.2.conv_shortcut/aten::_convolution/Convolution",
                "__module.model.conv_out/aten::_convolution/Convolution"
            ],
        ),
    )
    ov.save_model(quantized_unet, UNET_INT8_OV_PATH)

06

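Running the quantized model

Because quantizing the UNet can need a lot of memory and takes a long time, I exported a quantized UNet model in advance. It can be downloaded here:

Link: https://pan.baidu.com/s/1WMAsgFFkKKp-EAS6M1wK1g

Extraction code: psta

After downloading, extract it into the target folder `sdxl_vino_model` to run the quantized INT8 UNet model.

Text-to-image generation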

from pathlib import Path

import openvino as ov

from optimum.intel.openvino import OVStableDiffusionXLPipeline

import numpy as np

core = ov.Core()

model_dir = Path("./sdxl_vino_model")

UNET_INT8_OV_PATH = model_dir / "optimized_unet" / "openvino_model.xml"

int8_text2image_pipe = OVStableDiffusionXLPipeline.from_pretrained(model_dir, compile=False)

int8_text2image_pipe.unet.model = core.read_model(UNET_INT8_OV_PATH)

int8_text2image_pipe.unet.request = None

prompt = "cute cat"

image = int8_text2image_pipe(prompt, num_inference_steps=1, height=512, width=512, guidance_scale=0.0, generator=np.random.RandomState(987)).images[0]

display(image)

Compiling the text_encoder to CPU ...
Compiling the text_encoder_2 to CPU ...
0%| | 0/1 [00:00

import gc
del int8_text2image_pipe
gc.collect()

Image-to-image generation

from optimum.intel import OVStableDiffusionXLImg2ImgPipeline

int8_image2image_pipe = OVStableDiffusionXLImg2ImgPipeline.from_pretrained(model_dir, compile=False)
int8_image2image_pipe.unet.model = core.read_model(UNET_INT8_OV_PATH)
int8_image2image_pipe.unet.request = None

photo_prompt = "a cute cat with bow tie"
photo_image = int8_image2image_pipe(photo_prompt, image=image, num_inference_steps=2, generator=np.random.RandomState(511), guidance_scale=0.0, strength=0.5).images[0]
display(photo_image)

Compiling the text_encoder to CPU ...
Compiling the text_encoder_2 to CPU ...
Compiling the vae_encoder to CPU ...
0%| | 0/1 [00:00

We can compare the size of the UNet model before and after quantization; the reduction in model size is substantial:

from pathlib import Path

model_dir = Path("./sdxl_vino_model")
UNET_OV_PATH = model_dir / "unet" / "openvino_model.xml"
UNET_INT8_OV_PATH = model_dir / "optimized_unet" / "openvino_model.xml"

fp16_ir_model_size = UNET_OV_PATH.with_suffix(".bin").stat().st_size / 1024
quantized_model_size = UNET_INT8_OV_PATH.with_suffix(".bin").stat().st_size / 1024

print(f"FP16 model size: {fp16_ir_model_size:.2f} KB")
print(f"INT8 model size: {quantized_model_size:.2f} KB")
print(f"Model compression rate: {fp16_ir_model_size / quantized_model_size:.3f}")

FP16 model size: 5014578.27 KB
INT8 model size: 2513501.39 KB
Model compression rate: 1.995
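The sizes are printed in KB, which makes them hard to read at a glance. A small sketch that converts the two reported figures into GiB and recomputes the ratio; the helper name `compression_summary` is hypothetical, and the two inputs are copied from the reported output:

```python
def compression_summary(fp16_kb, int8_kb):
    """Return (FP16 size in GiB, INT8 size in GiB, compression ratio) from sizes in KiB."""
    kib_per_gib = 1024 * 1024
    return fp16_kb / kib_per_gib, int8_kb / kib_per_gib, fp16_kb / int8_kb


fp16_gib, int8_gib, ratio = compression_summary(5014578.27, 2513501.39)
print(f"FP16: {fp16_gib:.2f} GiB, INT8: {int8_gib:.2f} GiB, ratio: {ratio:.3f}")
```

A ratio close to 2 is what one would expect when 16-bit weights are replaced by 8-bit ones.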

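The code below gives a simple comparison of inference speed before and after quantization. Inference is nearly twice as fast, and NNCF brings the time to generate one image on the CPU to under two seconds:

FP16 pipeline latency: 3.148
INT8 pipeline latency: 1.558
Text-to-Image generation speed up: 2.020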

import time

def calculate_inference_time(pipe):
    inference_time = []
    for prompt in ['cat'] * 10:
        start = time.perf_counter()
        _ = pipe(
            prompt,
            num_inference_steps=1,
            guidance_scale=0.0,
            generator=np.random.RandomState(23)
        ).images[0]
        end = time.perf_counter()
        delta = end - start
        inference_time.append(delta)
    return np.median(inference_time)

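# int8_text2image_pipe was deleted earlier to free memory, so rebuild it before timing
int8_text2image_pipe = OVStableDiffusionXLPipeline.from_pretrained(model_dir, compile=False)
int8_text2image_pipe.unet.model = core.read_model(UNET_INT8_OV_PATH)
int8_text2image_pipe.unet.request = None

int8_latency = calculate_inference_time(int8_text2image_pipe)
text2image_pipe = OVStableDiffusionXLPipeline.from_pretrained(model_dir)
fp_latency = calculate_inference_time(text2image_pipe)

print(f"FP16 pipeline latency: {fp_latency:.3f}")
print(f"INT8 pipeline latency: {int8_latency:.3f}")
print(f"Text-to-Image generation speed up: {fp_latency / int8_latency:.3f}")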
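When timing pipelines it may be worth discarding a warm-up run, since the first call can include one-off costs. The sketch below is a generic, library-free variant of the median-latency measurement; `median_latency` and its parameters are hypothetical, and any zero-argument callable can be passed in:

```python
import statistics
import time


def median_latency(fn, runs=10, warmup=1):
    """Median wall-clock seconds per call of fn, after discarding warm-up calls."""
    for _ in range(warmup):
        fn()
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


if __name__ == "__main__":
    baseline = median_latency(lambda: sum(range(100_000)))
    print(f"median latency: {baseline:.6f} s")
```

With the pipelines from this article, a call might look like `median_latency(lambda: int8_text2image_pipe("cat", num_inference_steps=1, guidance_scale=0.0))`.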

07

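Interactive front-end demo

Finally, for convenience, here is a Gradio front-end demo. You can use it to generate the images you want and to experiment with different combinations of settings.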

import gradio as gr
from pathlib import Path
import openvino as ov
import numpy as np

core = ov.Core()
model_dir = Path("./sdxl_vino_model")

# If you only have the unquantized model, use this path and comment out the optimized_unet path:
# UNET_PATH = model_dir / "unet" / "openvino_model.xml"
UNET_PATH = model_dir / "optimized_unet" / "openvino_model.xml"

from optimum.intel.openvino import OVStableDiffusionXLPipeline

text2image_pipe = OVStableDiffusionXLPipeline.from_pretrained(model_dir)
text2image_pipe.unet.model = core.read_model(UNET_PATH)
text2image_pipe.unet.request = core.compile_model(text2image_pipe.unet.model)


def generate_from_text(text, seed, num_steps, height, width):
    result = text2image_pipe(text, num_inference_steps=num_steps, guidance_scale=0.0, generator=np.random.RandomState(seed), height=height, width=width).images[0]
    return result


with gr.Blocks() as demo:
    with gr.Column():
        positive_input = gr.Textbox(label="Text prompt")
        with gr.Row():
            seed_input = gr.Number(precision=0, label="Seed", value=42, minimum=0)
            steps_input = gr.Slider(label="Steps", value=1, minimum=1, maximum=4, step=1)
            height_input = gr.Slider(label="Height", value=512, minimum=256, maximum=1024, step=32)
            width_input = gr.Slider(label="Width", value=512, minimum=256, maximum=1024, step=32)
            btn = gr.Button()
        out = gr.Image(label="Result (Quantized)", type="pil", width=512)
        btn.click(generate_from_text, [positive_input, seed_input, steps_input, height_input, width_input], out)
        gr.Examples([
            ["cute cat", 999],
            ["underwater world coral reef, colorful jellyfish, 35mm, cinematic lighting, shallow depth of field, ultra quality, masterpiece, realistic", 89],
            ["a photo realistic happy white poodle dog playing in the grass, extremely detailed, high res, 8k, masterpiece, dynamic angle", 1569],
            ["Astronaut on Mars watching sunset, best quality, cinematic effects,", 65245],
            ["Black and white street photography of a rainy night in New York, reflections on wet pavement", 48199]
        ], [positive_input, seed_input])

try:
    demo.launch(debug=True)
except Exception:
    demo.launch(share=True, debug=True)

08

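Summary

With the optimizations in the latest OpenVINO release, image-generation models can be run efficiently on consumer hardware, which helps bring generative AI into real-world applications. We invite you to try OpenVINO and NNCF on generative AI workloads yourself.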

Reviewed and edited by: Liu Qing


Original title: Running the SDXL-Turbo text-to-image model on a 13th Gen Intel CPU with OpenVINO™ | Developer Hands-on

Source: WeChat official account 英特爾物聯網 (Intel IoT). Please credit the source when reposting.

發布評論請先 登錄

探索DeepSeek多樣化技術路徑,英特爾架構師用至強CPU嘗鮮

英特爾可變顯存技術讓32GB內存筆記本流暢運行Qwen 30B大模型

硬件與應用同頻共振,英特爾Day 0適配騰訊開源混元大模型

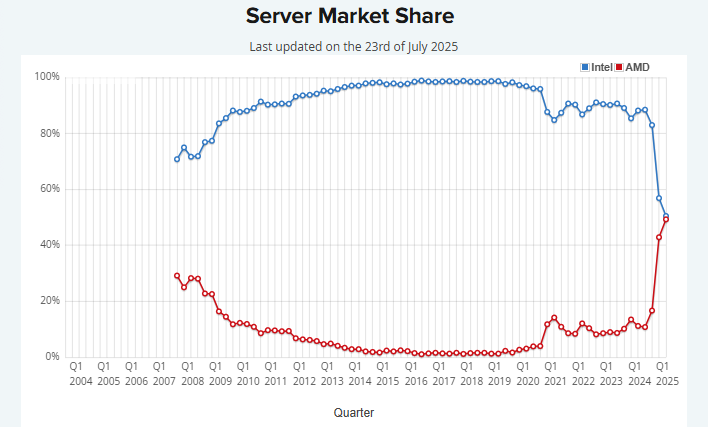

看點:AMD服務器CPU市場份額追上英特爾 華為Mate80主動散熱專利曝光

無法在NPU上推理OpenVINO?優化的 TinyLlama 模型怎么解決?

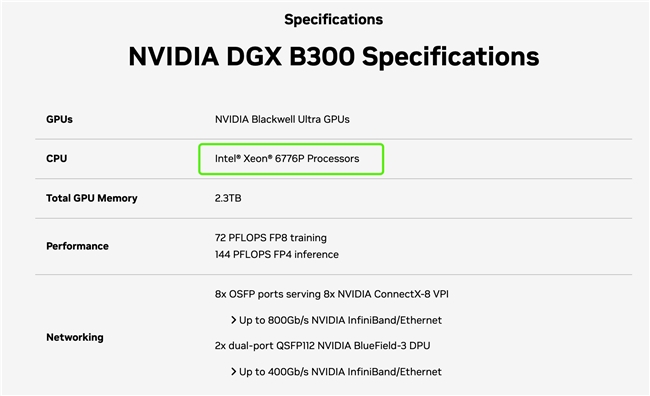

主控CPU全能選手,英特爾至強6助力AI系統高效運轉

使用英特爾? NPU 插件C++運行應用程序時出現錯誤:“std::Runtime_error at memory location”怎么解決?

使用Openvino? GenAI運行Sdxl Turbo模型時遇到錯誤怎么解決?

無法將Openvino? 2025.0與onnx運行時Openvino? 執行提供程序 1.16.2 結合使用,怎么處理?

新思科技與英特爾在EDA和IP領域展開深度合作

Intel OpenVINO? Day0 實現阿里通義 Qwen3 快速部署

更高效更安全的商務會議:英特爾聯合海信推出會議領域新型垂域模型方案

在英特爾酷睿Ultra AI PC上部署多種圖像生成模型

利用英特爾OpenVINO在本地運行Qwen2.5-VL系列模型

用OpenVINO?在英特爾13th Gen CPU上運行SDXL-Turbo文本圖像生成模型

用OpenVINO?在英特爾13th Gen CPU上運行SDXL-Turbo文本圖像生成模型

評論