Nginx + Keepalived Dual-Master Architecture: Best Practices for Eliminating Single Points of Failure

I. Overview

1.1 Background

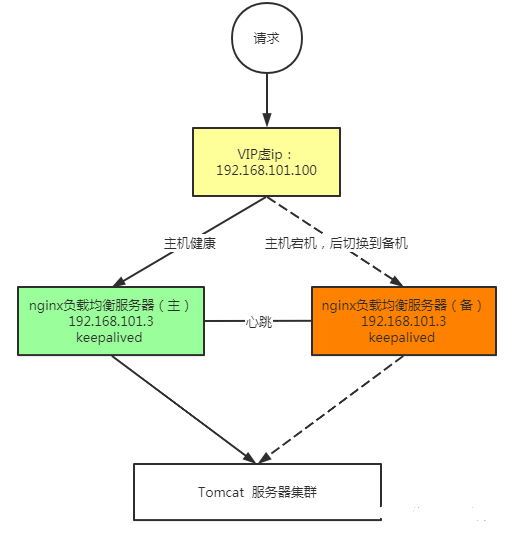

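Anyone who has run load balancers knows that a single Nginx instance is a time bomb. However stable it is, nobody can predict when a hardware failure, network jitter, or a kernel panic will hit. I have seen too many teams run on one unprotected machine while traffic is low, remember high availability only after an incident, rush a setup into production, and then get bitten again by a configuration that was never tuned.

The traditional Nginx + Keepalived active/standby mode has an obvious drawback: the standby sits idle. A server costing tens of thousands of yuan waits around just in case the primary dies, and the ROI never adds up. The dual-master architecture exists to solve exactly this: both machines do real work and back each other up, either one takes over when the other fails, and resource utilization effectively doubles.

At a fintech company I used this architecture to get through the Double 11 (Singles' Day) traffic peak: two 16-core, 64 GB machines, each carrying about 50% of the traffic day to day, and either one able to carry the full load at peak. Best of all, it cost far less than a dedicated load-balancer appliance.

1.2 Technical Features

The core idea of the dual-master architecture:

In active/standby mode the VIP (virtual IP) is bound only to the primary, and the standby just waits. Dual-master mode uses two VIPs: each machine is MASTER for one VIP and BACKUP for the other machine's VIP.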

    Normal operation:
        VIP1 (192.168.1.100) -> NodeA (master)    <- DNS round robin
        VIP2 (192.168.1.101) -> NodeB (master)    <- DNS round robin

    NodeA fails:
        VIP1 (192.168.1.100) -> NodeB (takeover)
        VIP2 (192.168.1.101) -> NodeB (master)

    NodeB fails:
        VIP1 (192.168.1.100) -> NodeA (master)
        VIP2 (192.168.1.101) -> NodeA (takeover)
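The ownership rules in the diagram can be written down as a small lookup. This is only an illustrative sketch: `vip_owner` is a hypothetical helper, not part of Keepalived, and it assumes each node is simply "up" (1) or "down" (0).

```shell
#!/usr/bin/env bash
# Sketch: which node answers for a VIP, given each node's health (1 = up, 0 = down).
# VIP1 prefers NodeA, VIP2 prefers NodeB; the other node is the fallback.
vip_owner() {
    local vip=$1 node_a_up=$2 node_b_up=$3
    local primary secondary primary_up secondary_up
    if [ "$vip" = "VIP1" ]; then
        primary=NodeA; primary_up=$node_a_up
        secondary=NodeB; secondary_up=$node_b_up
    else
        primary=NodeB; primary_up=$node_b_up
        secondary=NodeA; secondary_up=$node_a_up
    fi
    if [ "$primary_up" -eq 1 ]; then
        echo "$primary"
    elif [ "$secondary_up" -eq 1 ]; then
        echo "$secondary"
    else
        echo "none"
    fi
}

# Print the ownership table for the three states shown in the diagram.
for state in "1 1" "0 1" "1 0"; do
    read -r a b <<< "$state"
    echo "NodeA=$a NodeB=$b -> VIP1:$(vip_owner VIP1 "$a" "$b") VIP2:$(vip_owner VIP2 "$a" "$b")"
done
```

Because DNS hands out both VIPs, clients keep reaching a live node in every state except a double failure.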

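Technical advantages:

Doubled resource utilization: both servers serve traffic, nothing sits idle

Fast failover: Keepalived switches over within seconds, with little impact on the business

Good extensibility: the setup can later grow into a more complex cluster architecture

Low cost: commercial-grade high availability built from open-source software

1.3 Applicable Scenarios

This architecture suits:

Small and medium-sized websites with fewer than 5 million page views per day

Cost-sensitive scenarios that still need high availability

Entry gateways for internal systems

API gateway layers

Scenarios it does not suit:

Very high traffic (beyond what a single machine can handle): use LVS + Keepalived instead

Complex traffic scheduling: use a dedicated ADC appliance

1.4 Environment Requirements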

| Component | Version | Notes |
|---|---|---|
| Operating system | Rocky Linux 9.3 / Ubuntu 24.04 LTS | This article uses Rocky Linux |
| Nginx | 1.26.2 (stable) / 1.27.3 (mainline) | Stable is recommended |
| Keepalived | 2.3.1 | Recent stable release |
| Servers | 2 | Identical configuration; 8 cores / 16 GB recommended as a minimum |
| NIC | 1 GbE / 10 GbE | Bonded dual NICs preferred |
| VIP | 2 | In the same subnet as the servers |

Example network plan:

| Node | Real IP | VIP | Role |
|---|---|---|---|
| NodeA | 192.168.1.11 | 192.168.1.100 (master) | Nginx + Keepalived |
| NodeB | 192.168.1.12 | 192.168.1.101 (master) | Nginx + Keepalived |

II. Detailed Steps

2.1 Preparation

Base configuration required on both servers

Disable SELinux:

    # Disable for the running system
    setenforce 0
    # Disable permanently
    sed -i 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

Configure the firewall:

    # Open the Nginx ports
    firewall-cmd --permanent --add-port=80/tcp
    firewall-cmd --permanent --add-port=443/tcp
    # Allow the VRRP protocol (used by Keepalived to communicate)
    firewall-cmd --permanent --add-rich-rule='rule protocol value="vrrp" accept'
    # Reload the firewall
    firewall-cmd --reload

Kernel parameter tuning:

    cat > /etc/sysctl.d/99-nginx-keepalived.conf <

Configure hosts (optional, makes management easier):

    cat >> /etc/hosts <

Install Nginx

Method 1: install from the official repository (recommended)

    # Add the official Nginx repository
    cat > /etc/yum.repos.d/nginx.repo <

Method 2: build from source (when specific modules are needed)

    # Install build dependencies
    dnf install -y gcc make pcre2-devel openssl-devel zlib-devel libxml2-devel libxslt-devel gd-devel GeoIP-devel
    # Download the source
    cd /usr/local/src
    wget https://nginx.org/download/nginx-1.26.2.tar.gz
    tar xzf nginx-1.26.2.tar.gz
    cd nginx-1.26.2
    # Configure the build
    ./configure \
        --prefix=/etc/nginx \
        --sbin-path=/usr/sbin/nginx \
        --modules-path=/usr/lib64/nginx/modules \
        --conf-path=/etc/nginx/nginx.conf \
        --error-log-path=/var/log/nginx/error.log \
        --http-log-path=/var/log/nginx/access.log \
        --pid-path=/run/nginx.pid \
        --lock-path=/run/nginx.lock \
        --with-threads \
        --with-file-aio \
        --with-http_ssl_module \
        --with-http_v2_module \
        --with-http_realip_module \
        --with-http_gzip_static_module \
        --with-http_stub_status_module \
        --with-stream \
        --with-stream_ssl_module \
        --with-stream_realip_module
    make -j$(nproc)
    make install

Install Keepalived

    # Rocky Linux 9
    dnf install -y keepalived
    # Check the version
    keepalived --version
    # Keepalived v2.3.1

    # If the repository version is too old, build from source
    cd /usr/local/src
    wget https://www.keepalived.org/software/keepalived-2.3.1.tar.gz
    tar xzf keepalived-2.3.1.tar.gz
    cd keepalived-2.3.1
    ./configure --prefix=/usr/local/keepalived
    make -j$(nproc)
    make install
    # Create symlinks
    ln -sf /usr/local/keepalived/sbin/keepalived /usr/sbin/keepalived
    ln -sf /usr/local/keepalived/etc/keepalived /etc/keepalived

2.2 Core Configuration

Nginx configuration (identical on both servers)

    # /etc/nginx/nginx.conf
    user nginx;
    worker_processes auto;
    worker_cpu_affinity auto;
    error_log /var/log/nginx/error.log warn;
    pid /run/nginx.pid;

    # Maximum number of files a worker process may open
    worker_rlimit_nofile 65535;

    events {
        use epoll;
        worker_connections 65535;
        multi_accept on;
    }

    http {
        include /etc/nginx/mime.types;
        default_type application/octet-stream;

        # Log format, including the real client IP
        log_format main '$remote_addr - $remote_user [$time_local] "$request" '
                        '$status $body_bytes_sent "$http_referer" '
                        '"$http_user_agent" "$http_x_forwarded_for" '
                        'rt=$request_time uct="$upstream_connect_time" '
                        'uht="$upstream_header_time" urt="$upstream_response_time"';
        access_log /var/log/nginx/access.log main buffer=16k flush=5s;

        # Basic tuning
        sendfile on;
        tcp_nopush on;
        tcp_nodelay on;
        keepalive_timeout 65;
        types_hash_max_size 2048;

        # Gzip compression
        gzip on;
        gzip_min_length 1k;
        gzip_comp_level 4;
        gzip_types text/plain text/css application/json application/javascript text/xml application/xml application/xml+rss text/javascript;
        gzip_vary on;

        # Proxy buffers
        proxy_buffer_size 16k;
        proxy_buffers 4 64k;
        proxy_busy_buffers_size 128k;

        # Connection timeouts
        proxy_connect_timeout 5s;
        proxy_send_timeout 60s;
        proxy_read_timeout 60s;

        # Upstream server group
        upstream backend_servers {
            least_conn;
            keepalive 100;
            server 192.168.1.21:8080 weight=100 max_fails=3 fail_timeout=10s;
            server 192.168.1.22:8080 weight=100 max_fails=3 fail_timeout=10s;
            server 192.168.1.23:8080 weight=100 max_fails=3 fail_timeout=10s;
        }

        # Default server: reject unknown Host values
        server {
            listen 80 default_server;
            listen 443 ssl default_server;
            server_name _;
            ssl_certificate /etc/nginx/certs/default.crt;
            ssl_certificate_key /etc/nginx/certs/default.key;
            return 444;
        }

        # Health check endpoint, used by Keepalived
        server {
            listen 127.0.0.1:10080;
            server_name localhost;

            location /nginx_status {
                stub_status on;
                allow 127.0.0.1;
                deny all;
            }

            location /health {
                return 200 "OK\n";
                add_header Content-Type text/plain;
            }
        }

        # Business server
        server {
            listen 80;
            listen 443 ssl;
            server_name www.example.com example.com;

            ssl_certificate /etc/nginx/certs/example.com.crt;
            ssl_certificate_key /etc/nginx/certs/example.com.key;
            ssl_protocols TLSv1.2 TLSv1.3;
            ssl_ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256;
            ssl_prefer_server_ciphers on;
            ssl_session_cache shared:SSL:10m;
            ssl_session_timeout 10m;

            # HSTS
            add_header Strict-Transport-Security "max-age=31536000" always;

            # Static files
            location ~* \.(jpg|jpeg|png|gif|ico|css|js|woff|woff2)$ {
                root /var/www/static;
                expires 30d;
                add_header Cache-Control "public, immutable";
            }

            # Proxy dynamic requests to the backend
            location / {
                proxy_pass http://backend_servers;
                proxy_http_version 1.1;
                proxy_set_header Connection "";
                proxy_set_header Host $host;
                proxy_set_header X-Real-IP $remote_addr;
                proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
                proxy_set_header X-Forwarded-Proto $scheme;

                # On errors, retry the request on the next upstream server
                proxy_next_upstream error timeout http_500 http_502 http_503 http_504;
                proxy_next_upstream_tries 3;
                proxy_next_upstream_timeout 10s;
            }
        }

        include /etc/nginx/conf.d/*.conf;
    }

Keepalived configuration: NodeA

    # /etc/keepalived/keepalived.conf (NodeA)
    global_defs {
        router_id NGINX_HA_NODE_A

        # Script security settings
        script_user root
        enable_script_security

        # Email alerts (optional)
        notification_email {
            ops@example.com
        }
        notification_email_from keepalived@nginx-node-a
        smtp_server 127.0.0.1
        smtp_connect_timeout 30
    }

    # Nginx health check script
    vrrp_script check_nginx {
        script "/etc/keepalived/scripts/check_nginx.sh"
        interval 2      # run the check every 2 seconds
        weight -60      # subtract 60 from priority on failure; must exceed the 50-point gap to the peer, or the VIP never moves
        fall 3          # 3 consecutive failures mark the service as down
        rise 2          # 2 consecutive successes mark it as recovered
    }

    # VIP1: NodeA is MASTER
    vrrp_instance VI_1 {
        state MASTER                # initial state
        interface eth0              # interface to bind to
        virtual_router_id 51        # virtual router ID, must match within a group
        priority 150                # MASTER must be higher than BACKUP
        advert_int 1                # VRRP advertisement interval

        # Authentication (must match on both nodes)
        authentication {
            auth_type PASS
            auth_pass K33pAl1v3d_VIP1   # only the first 8 characters are used
        }

        # Virtual IP
        virtual_ipaddress {
            192.168.1.100/24 dev eth0 label eth0:vip1
        }

        # Attach the health check script
        track_script {
            check_nginx
        }

        # State-change notification scripts
        notify_master "/etc/keepalived/scripts/notify.sh master VI_1"
        notify_backup "/etc/keepalived/scripts/notify.sh backup VI_1"
        notify_fault "/etc/keepalived/scripts/notify.sh fault VI_1"
    }

    # VIP2: NodeA is BACKUP
    vrrp_instance VI_2 {
        state BACKUP                # initial state
        interface eth0
        virtual_router_id 52        # must differ from VI_1
        priority 100                # lower than NodeB
        advert_int 1

        authentication {
            auth_type PASS
            auth_pass K33pAl1v3d_VIP2
        }

        virtual_ipaddress {
            192.168.1.101/24 dev eth0 label eth0:vip2
        }

        track_script {
            check_nginx
        }

        notify_master "/etc/keepalived/scripts/notify.sh master VI_2"
        notify_backup "/etc/keepalived/scripts/notify.sh backup VI_2"
        notify_fault "/etc/keepalived/scripts/notify.sh fault VI_2"
    }

Keepalived configuration: NodeB

    # /etc/keepalived/keepalived.conf (NodeB)
    global_defs {
        router_id NGINX_HA_NODE_B
        script_user root
        enable_script_security

        notification_email {
            ops@example.com
        }
        notification_email_from keepalived@nginx-node-b
        smtp_server 127.0.0.1
        smtp_connect_timeout 30
    }

    vrrp_script check_nginx {
        script "/etc/keepalived/scripts/check_nginx.sh"
        interval 2
        weight -60      # same rule as on NodeA: larger than the 50-point priority gap
        fall 3
        rise 2
    }

    # VIP1: NodeB is BACKUP
    vrrp_instance VI_1 {
        state BACKUP
        interface eth0
        virtual_router_id 51        # same as VI_1 on NodeA
        priority 100                # lower than NodeA
        advert_int 1

        authentication {
            auth_type PASS
            auth_pass K33pAl1v3d_VIP1   # must match NodeA
        }

        virtual_ipaddress {
            192.168.1.100/24 dev eth0 label eth0:vip1
        }

        track_script {
            check_nginx
        }

        notify_master "/etc/keepalived/scripts/notify.sh master VI_1"
        notify_backup "/etc/keepalived/scripts/notify.sh backup VI_1"
        notify_fault "/etc/keepalived/scripts/notify.sh fault VI_1"
    }

    # VIP2: NodeB is MASTER
    vrrp_instance VI_2 {
        state MASTER
        interface eth0
        virtual_router_id 52
        priority 150                # higher than NodeA
        advert_int 1

        authentication {
            auth_type PASS
            auth_pass K33pAl1v3d_VIP2
        }

        virtual_ipaddress {
            192.168.1.101/24 dev eth0 label eth0:vip2
        }

        track_script {
            check_nginx
        }

        notify_master "/etc/keepalived/scripts/notify.sh master VI_2"
        notify_backup "/etc/keepalived/scripts/notify.sh backup VI_2"
        notify_fault "/etc/keepalived/scripts/notify.sh fault VI_2"
    }

Health check script

    #!/bin/bash
    # /etc/keepalived/scripts/check_nginx.sh
    # Nginx health check script

    # Option 1: check that the process exists
    # pidof nginx > /dev/null 2>&1 || exit 1

    # Option 2: check that the port is listening (more accurate)
    # ss -tlnp | grep -q ':80 ' || exit 1

    # Option 3: HTTP health check (most reliable)
    RESPONSE=$(curl -s -o /dev/null -w "%{http_code}" http://127.0.0.1:10080/health 2>/dev/null)

    if [ "$RESPONSE" = "200" ]; then
        exit 0
    else
        exit 1
    fi
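How the `fall` and `rise` counters turn individual check results into a stable up/down verdict can be sketched in a few lines. This is a simplified model of the behaviour described in the `vrrp_script` comments, not Keepalived's actual code; `debounce` is a hypothetical helper, and its input is a string with one character per check interval (1 = check passed, 0 = check failed).

```shell
#!/usr/bin/env bash
# Sketch of fall/rise debouncing: `fall` consecutive failures switch the state
# to down, `rise` consecutive successes switch it back to up.
# Prints one letter per interval: u = considered up, d = considered down.
debounce() {
    local results=$1 fall=$2 rise=$3
    local state=up fails=0 oks=0 i r out=""
    for ((i = 0; i < ${#results}; i++)); do
        r=${results:i:1}
        if [ "$r" = "1" ]; then
            oks=$((oks + 1)); fails=0
            if [ "$state" = "down" ] && [ "$oks" -ge "$rise" ]; then state=up; fi
        else
            fails=$((fails + 1)); oks=0
            if [ "$state" = "up" ] && [ "$fails" -ge "$fall" ]; then state=down; fi
        fi
        out+="${state:0:1}"
    done
    echo "$out"
}

# Three failures in a row trip the check; two successes clear it again.
debounce 1100011011 3 2
```

A single failed probe (for example `debounce 1011 3 2`) never changes the state, which is why `fall 3` filters out short blips at the cost of a few seconds of detection delay.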

State notification script

    #!/bin/bash
    # /etc/keepalived/scripts/notify.sh
    # Keepalived state-change notification script

    STATE=$1            # master/backup/fault
    VRRP_INSTANCE=$2
    HOSTNAME=$(hostname)
    DATETIME=$(date '+%Y-%m-%d %H:%M:%S')
    LOG_FILE="/var/log/keepalived-notify.log"

    log_message() {
        echo "[$DATETIME] $1" >> "$LOG_FILE"
    }

    case "$STATE" in
        master)
            log_message "[$VRRP_INSTANCE] Transition to MASTER on $HOSTNAME"
            # Send an alert here if needed
            # curl -X POST "https://your-webhook.com/alert" -d "msg=$HOSTNAME became MASTER for $VRRP_INSTANCE"
            ;;
        backup)
            log_message "[$VRRP_INSTANCE] Transition to BACKUP on $HOSTNAME"
            ;;
        fault)
            log_message "[$VRRP_INSTANCE] Transition to FAULT on $HOSTNAME"
            # Fault state: send an urgent alert
            # curl -X POST "https://your-webhook.com/alert" -d "msg=$HOSTNAME FAULT for $VRRP_INSTANCE"
            ;;
        *)
            log_message "[$VRRP_INSTANCE] Unknown state: $STATE"
            ;;
    esac

Set script permissions:

    mkdir -p /etc/keepalived/scripts
    chmod +x /etc/keepalived/scripts/*.sh

2.3 Startup and Verification

Start the services:

    # Run on both servers
    # Start Nginx
    systemctl enable nginx
    systemctl start nginx
    systemctl status nginx
    # Start Keepalived
    systemctl enable keepalived
    systemctl start keepalived
    systemctl status keepalived

Verify the VIP bindings:

    # On NodeA
    ip addr show eth0 | grep -E "inet.*vip"
    # Expect 192.168.1.100

    # On NodeB
    ip addr show eth0 | grep -E "inet.*vip"
    # Expect 192.168.1.101

Verify service availability:

    # Test both VIPs from a client.
    # The default server answers unknown Host values with 444, so send a configured Host header.
    curl -I -H "Host: www.example.com" http://192.168.1.100/
    curl -I -H "Host: www.example.com" http://192.168.1.101/

Test failover:

    # Stop Nginx on NodeA
    systemctl stop nginx

    # Wait a few seconds, then check that the VIP has moved to NodeB
    # Run on NodeB
    ip addr show eth0 | grep -E "inet.*vip"
    # Expect both VIPs: 192.168.1.100 and 192.168.1.101

    # Restore Nginx on NodeA
    systemctl start nginx
    # VIP1 should move back to NodeA (preemptive mode)
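How long "a few seconds" is can be estimated from the configured timers. The sketch below is a back-of-the-envelope calculation, not a measurement: it assumes the check parameters used in this article (`interval 2`, `fall 3`, `advert_int 1`) and the master-down interval defined by the VRRP specification (RFC 3768), `3 * advert_int + (256 - priority) / 256`. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Worst-case time for the health check to declare Nginx down: interval * fall.
check_detect_seconds() {
    local interval=$1 fall=$2
    echo $((interval * fall))
}

# Time for a BACKUP to declare a silent MASTER dead (whole node or link gone),
# per RFC 3768: 3 * advert_int + skew, where skew = (256 - priority) / 256.
master_down_seconds() {
    local advert_int=$1 backup_priority=$2
    awk -v a="$advert_int" -v p="$backup_priority" \
        'BEGIN { printf "%.3f\n", 3 * a + (256 - p) / 256 }'
}

echo "Nginx failure detected after up to $(check_detect_seconds 2 3)s"
echo "Dead master detected after about $(master_down_seconds 1 100)s"
```

In the Nginx-stop test the check delay dominates, since the node stays alive and keeps advertising with its lowered priority. When the whole node dies, the peer instead has to wait out the master-down interval.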

III. Sample Code and Configuration

3.1 Complete Configuration Example

Production configuration checklist

    # Directory layout
    /etc/nginx/
    ├── nginx.conf
    ├── conf.d/
    │   ├── upstream.conf              # upstream server configuration
    │   ├── ssl.conf                   # shared SSL configuration
    │   └── www.example.com.conf       # site configuration
    ├── certs/
    │   ├── example.com.crt
    │   └── example.com.key
    └── snippets/
        ├── proxy-params.conf          # proxy parameters
        └── security-headers.conf      # security headers

    /etc/keepalived/
    ├── keepalived.conf
    └── scripts/
        ├── check_nginx.sh
        └── notify.sh

upstream.conf

    # /etc/nginx/conf.d/upstream.conf

    # Web application servers
    upstream web_app {
        least_conn;
        keepalive 100;
        keepalive_requests 1000;
        keepalive_timeout 60s;
        server 10.10.1.11:8080 weight=100 max_fails=3 fail_timeout=10s;
        server 10.10.1.12:8080 weight=100 max_fails=3 fail_timeout=10s;
        server 10.10.1.13:8080 weight=100 max_fails=3 fail_timeout=10s;
        server 10.10.1.14:8080 weight=100 max_fails=3 fail_timeout=10s;
    }

    # API service
    upstream api_service {
        least_conn;
        keepalive 50;
        server 10.10.2.11:3000 weight=100;
        server 10.10.2.12:3000 weight=100;
    }

    # WebSocket service
    upstream websocket_service {
        ip_hash;    # WebSocket needs session persistence
        server 10.10.3.11:8000;
        server 10.10.3.12:8000;
    }

proxy-params.conf

    # /etc/nginx/snippets/proxy-params.conf
    proxy_http_version 1.1;
    proxy_set_header Connection "";
    proxy_set_header Host $host;
    proxy_set_header X-Real-IP $remote_addr;
    proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
    proxy_set_header X-Forwarded-Proto $scheme;
    proxy_set_header X-Forwarded-Host $host;
    proxy_set_header X-Forwarded-Port $server_port;

    proxy_connect_timeout 5s;
    proxy_send_timeout 60s;
    proxy_read_timeout 60s;

    proxy_buffer_size 16k;
    proxy_buffers 4 64k;
    proxy_busy_buffers_size 128k;

    proxy_next_upstream error timeout http_500 http_502 http_503 http_504;
    proxy_next_upstream_tries 3;
    proxy_next_upstream_timeout 10s;

Site configuration

    # /etc/nginx/conf.d/www.example.com.conf

    # HTTP -> HTTPS redirect
    server {
        listen 80;
        server_name www.example.com example.com;
        return 301 https://$host$request_uri;
    }

    # HTTPS main site
    server {
        listen 443 ssl;
        http2 on;       # replaces the deprecated "listen ... http2" form (Nginx 1.25.1+)
        server_name www.example.com example.com;

        # SSL configuration
        ssl_certificate /etc/nginx/certs/example.com.crt;
        ssl_certificate_key /etc/nginx/certs/example.com.key;
        ssl_protocols TLSv1.2 TLSv1.3;
        ssl_ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384;
        ssl_prefer_server_ciphers on;
        ssl_session_cache shared:SSL:10m;
        ssl_session_timeout 1d;
        ssl_session_tickets off;

        # Security headers
        add_header Strict-Transport-Security "max-age=31536000; includeSubDomains" always;
        add_header X-Frame-Options "SAMEORIGIN" always;
        add_header X-Content-Type-Options "nosniff" always;
        add_header X-XSS-Protection "1; mode=block" always;

        # Static files
        location /static/ {
            alias /var/www/static/;
            expires 30d;
            add_header Cache-Control "public, immutable";
            # Serve static files directly, without proxying
            try_files $uri =404;
        }

        # API endpoints
        location /api/ {
            include /etc/nginx/snippets/proxy-params.conf;
            proxy_pass http://api_service;
        }

        # WebSocket
        location /ws/ {
            proxy_pass http://websocket_service;
            proxy_http_version 1.1;
            proxy_set_header Upgrade $http_upgrade;
            proxy_set_header Connection "upgrade";
            proxy_set_header Host $host;
            proxy_set_header X-Real-IP $remote_addr;
            # WebSocket needs longer timeouts
            proxy_read_timeout 3600s;
            proxy_send_timeout 3600s;
        }

        # Everything else goes to the web application
        location / {
            include /etc/nginx/snippets/proxy-params.conf;
            proxy_pass http://web_app;
        }

        # Health check endpoint (for an upstream load balancer)
        location /health {
            access_log off;
            return 200 "OK\n";
            add_header Content-Type text/plain;
        }
    }

3.2 Real-World Cases

Case 1: multi-site dual-master architecture

A company has three domains that need high availability:

    # DNS configuration (every domain resolves to both VIPs)
    www.site-a.com     A    192.168.1.100
    www.site-a.com     A    192.168.1.101
    www.site-b.com     A    192.168.1.100
    www.site-b.com     A    192.168.1.101
    api.company.com    A    192.168.1.100
    api.company.com    A    192.168.1.101

Nginx is configured with several server blocks, one backend per domain:

    # /etc/nginx/conf.d/multi-site.conf

    # Site A
    server {
        listen 80;
        server_name www.site-a.com;
        location / {
            proxy_pass http://site_a_backend;
        }
    }

    # Site B
    server {
        listen 80;
        server_name www.site-b.com;
        location / {
            proxy_pass http://site_b_backend;
        }
    }

    # API
    server {
        listen 80;
        server_name api.company.com;
        location / {
            proxy_pass http://api_backend;
        }
    }

Case 2: non-preemptive dual-master

In some scenarios you do not want the VIP to move back and forth (to reduce the jitter each switchover causes). Non-preemptive mode handles this:

    # NodeA: VI_1 configuration
    vrrp_instance VI_1 {
        state BACKUP        # both sides are set to BACKUP
        nopreempt           # key setting: disable preemption
        interface eth0
        virtual_router_id 51
        priority 150        # the higher priority becomes MASTER first
        advert_int 1

        authentication {
            auth_type PASS
            auth_pass K33pAl1v3d
        }

        virtual_ipaddress {
            192.168.1.100/24
        }

        track_script {
            check_nginx
        }
    }

    # NodeB: VI_1 configuration
    vrrp_instance VI_1 {
        state BACKUP
        nopreempt
        interface eth0
        virtual_router_id 51
        priority 100
        advert_int 1

        authentication {
            auth_type PASS
            auth_pass K33pAl1v3d
        }

        virtual_ipaddress {
            192.168.1.100/24
        }

        track_script {
            check_nginx
        }
    }

With this configuration NodeA does not take the VIP back from NodeB after it recovers, which avoids unnecessary switchovers.
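The difference between the two modes comes down to one decision when a node comes back. A minimal sketch of that decision follows; `reclaims_vip` is a hypothetical helper that models the rule, not something Keepalived exposes.

```shell
#!/usr/bin/env bash
# Sketch: does a recovered node take the VIP back from the current MASTER?
#   $1 = recovered node's priority, $2 = current master's priority,
#   $3 = "yes" if nopreempt is set on the recovered node.
reclaims_vip() {
    local my_priority=$1 master_priority=$2 nopreempt=$3
    if [ "$nopreempt" = "yes" ]; then
        echo no     # non-preemptive: stay BACKUP until the current master fails
    elif [ "$my_priority" -gt "$master_priority" ]; then
        echo yes    # preemptive: the higher priority wins the VIP back
    else
        echo no
    fi
}

echo "preemptive, 150 vs 100:     $(reclaims_vip 150 100 no)"
echo "non-preemptive, 150 vs 100: $(reclaims_vip 150 100 yes)"
```

The trade-off is that in non-preemptive mode both VIPs can end up on one node after a failure and stay there until someone moves one back by hand.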

Case 3: working with a cloud provider's SLB

In cloud environments there is usually a cloud load balancer in front (such as AWS ALB or Alibaba Cloud SLB). The dual-master architecture then becomes:

    SLB (cloud provider)
    ├── NodeA (real IP)
    └── NodeB (real IP)

This scenario does not need Keepalived to move a VIP, but its health checking is still useful:

    # Simplified keepalived.conf: health checking only
    global_defs {
        router_id NGINX_HEALTH
    }

    vrrp_script check_nginx {
        script "/etc/keepalived/scripts/check_nginx.sh"
        interval 2
        weight -20
        fall 3
        rise 2
    }

    # Use unicast to avoid interfering with the cloud network
    vrrp_instance VI_HEALTH {
        state BACKUP
        interface eth0
        virtual_router_id 99
        priority 100
        advert_int 1
        nopreempt

        # Unicast configuration
        unicast_src_ip 10.0.1.11
        unicast_peer {
            10.0.1.12
        }

        track_script {
            check_nginx
        }

        notify_master "/etc/keepalived/scripts/notify_slb.sh register"
        notify_fault "/etc/keepalived/scripts/notify_slb.sh deregister"
    }

The notify_slb.sh script registers and deregisters the node through the cloud provider's API.
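The article does not show notify_slb.sh, so the following is only a hypothetical skeleton of its shape. `CLOUD_CLI`, `SLB_ID`, `NODE_IP` and the `slb add-backend` / `slb remove-backend` sub-commands are placeholders invented for illustration, not a real vendor CLI; replace them with your provider's actual API call. By default the script runs dry and only prints what it would execute.

```shell
#!/usr/bin/env bash
# Hypothetical skeleton for /etc/keepalived/scripts/notify_slb.sh.
# All names below are placeholders; no real cloud CLI is assumed.
CLOUD_CLI=${CLOUD_CLI:-echo cloud-cli}   # dry run by default: just print the command
SLB_ID=${SLB_ID:-slb-example}            # placeholder load balancer ID
NODE_IP=${NODE_IP:-10.0.1.11}            # this node's real IP

slb_action() {
    case "$1" in
        # $CLOUD_CLI is left unquoted on purpose so "echo cloud-cli" splits into words
        register)   $CLOUD_CLI slb add-backend "$SLB_ID" "$NODE_IP" ;;
        deregister) $CLOUD_CLI slb remove-backend "$SLB_ID" "$NODE_IP" ;;
        *)          echo "usage: notify_slb.sh register|deregister" >&2; return 1 ;;
    esac
}

slb_action "${1:-register}"
```

Keeping the provider call behind one variable makes the script easy to test on a workstation before it is wired into `notify_master` and `notify_fault`.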

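IV. Best Practices and Caveats

4.1 Best Practices

1. Health checks should test the business path, not just the process

I have seen many setups that only check whether the Nginx process exists, which is not enough. If the process is alive but every backend is down, or a configuration error makes it return 500, a process check will never notice.

    #!/bin/bash
    # A more thorough health check script

    # Check the Nginx process
    if ! pidof nginx > /dev/null; then
        echo "Nginx process not found"
        exit 1
    fi

    # Check that the port is listening
    if ! ss -tlnp | grep -q ':80 '; then
        echo "Port 80 not listening"
        exit 1
    fi

    # Check the HTTP response
    HTTP_CODE=$(curl -s -o /dev/null -w "%{http_code}" --connect-timeout 2 --max-time 5 http://127.0.0.1:10080/health 2>/dev/null)
    if [ "$HTTP_CODE" != "200" ]; then
        echo "Health check returned $HTTP_CODE"
        exit 1
    fi

    # Optional: check backend connectivity
    BACKEND_STATUS=$(curl -s -o /dev/null -w "%{http_code}" --connect-timeout 2 --max-time 5 http://127.0.0.1/api/health 2>/dev/null)
    if [ "$BACKEND_STATUS" -ge 500 ]; then
        echo "Backend unhealthy, status: $BACKEND_STATUS"
        exit 1
    fi

    exit 0

2. Choose the priority gap and weight together

With a negative weight, a failed check lowers the node's priority by the absolute value of weight. The VIP moves only if the lowered priority falls below the peer's priority, so the absolute value of weight must be greater than the priority gap; otherwise the VIP stays on the node whose Nginx is down:

    # Assume NodeA priority = 150, NodeB priority = 100, gap = 50
    #
    # weight = -20 (smaller than the gap)
    #   NodeA health check fails -> priority 150 - 20 = 130, still above NodeB's 100
    #   The VIP stays on NodeA even though Nginx is down
    #
    # weight = -60 (larger than the gap)
    #   NodeA health check fails -> priority 150 - 60 = 90, below NodeB's 100
    #   The VIP moves to NodeB
    #   When NodeA recovers, its priority returns to 150 and, in preemptive mode, the VIP moves back
    #
    # If the move back is unwanted, use nopreempt, and raise fall/rise
    # so that a flapping check does not bounce the VIP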
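The arithmetic behind the priority and weight interaction is easy to check with a few lines of shell. The sketch assumes the usual Keepalived behaviour for a negative weight (a failing check adds the weight to the base priority) and that the peer's own check is passing. `effective_priority` and `elect` are illustrative helper names, not Keepalived commands.

```shell
#!/usr/bin/env bash
# Effective priority: the base priority, plus the (negative) weight while the check fails.
effective_priority() {
    local base=$1 weight=$2 check_ok=$3
    if [ "$check_ok" -eq 1 ]; then
        echo "$base"
    else
        echo $((base + weight))
    fi
}

# Which node ends up holding one VIP.
#   $1 = NodeA base priority, $2 = NodeB base priority,
#   $3 = weight, $4 = 1 if NodeA's check passes, 0 if it fails.
elect() {
    local a_base=$1 b_base=$2 weight=$3 a_check_ok=$4 a b
    a=$(effective_priority "$a_base" "$weight" "$a_check_ok")
    b=$(effective_priority "$b_base" "$weight" 1)
    if [ "$a" -gt "$b" ]; then
        echo "NodeA holds the VIP ($a vs $b)"
    else
        echo "NodeB holds the VIP ($a vs $b)"
    fi
}

elect 150 100 -20 0   # failed NodeA still wins: 130 vs 100
elect 150 100 -60 0   # failed NodeA loses:      90 vs 100
elect 110 100 -20 0   # failed NodeA loses:      90 vs 100
```

Running a candidate priority/weight pair through `elect` before deploying it shows immediately whether a failed check would actually move the VIP.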

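3. Configure multicast or unicast

By default Keepalived communicates over the multicast address 224.0.0.18. In networks where multicast does not get through, switch to unicast:

    vrrp_instance VI_1 {
        state MASTER
        interface eth0
        virtual_router_id 51
        priority 150
        advert_int 1

        # Local IP
        unicast_src_ip 192.168.1.11
        # Peer IP
        unicast_peer {
            192.168.1.12
        }

        authentication {
            auth_type PASS
            auth_pass K33pAl1v3d
        }

        virtual_ipaddress {
            192.168.1.100/24
        }
    }

4. Separate the logs

Keepalived logs go to the system log by default; a dedicated file is recommended:

    # /etc/rsyslog.d/keepalived.conf
    if $programname startswith 'Keepalived' then /var/log/keepalived.log
    & stop

4.2 Caveats

| Problem | Symptom | Cause | Solution |
|---|---|---|---|
| Split-brain | Both nodes hold the same VIP | A network fault makes the heartbeat fail | 1. Check network connectivity 2. Use unicast instead of multicast 3. Add a dedicated heartbeat network |
| VIP does not move | The master is down but the VIP does not switch | 1. Firewall blocks VRRP 2. virtual_router_id mismatch 3. weight too small for the priority gap | 1. Allow the VRRP protocol 2. Check that the configurations match 3. Make the absolute weight larger than the priority gap |
| VIP flaps | Frequent switchovers | 1. Badly chosen priority and weight values 2. Network jitter | 1. Review priority and weight, or use nopreempt 2. Increase the fall/rise counts |
| Service unavailable after switchover | The VIP moved but access fails | 1. Stale connection-tracking entries 2. ARP cache | 1. Flush conntrack on switchover 2. Send GARP |
| False health-check failures | The service is fine but is judged failed | The check script times out or has a logic error | 1. Increase the timeout 2. Improve the check logic |

A lesson learned the hard way:

We once hit a strange problem in production: the VIP had moved, but clients still could not connect. Investigation showed that the upstream switch had not refreshed its ARP cache in time. The fix was to send gratuitous ARP explicitly from the notify script:

    # Add to the master branch of notify.sh
    arping -c 3 -A -I eth0 192.168.1.100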
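In the dual-master layout a node may take over either VIP, so the announcement is easier to keep correct as a small loop. This is a sketch under assumptions: the interface name and VIP list come from the network plan in this article, `send_garp` is a hypothetical helper, and `ARPING` defaults to a dry run that only prints the commands.

```shell
#!/usr/bin/env bash
# Sketch: announce one or more VIPs with gratuitous ARP.
# ARPING defaults to "echo arping" (dry run); set ARPING=arping on a real node.
ARPING=${ARPING:-echo arping}
IFACE=${IFACE:-eth0}

send_garp() {
    local vip
    for vip in "$@"; do
        # $ARPING is unquoted on purpose so the dry-run value splits into two words
        $ARPING -c 3 -A -I "$IFACE" "$vip"
    done
}

send_garp 192.168.1.100 192.168.1.101
```

In notify.sh the call would pass only the VIP of the instance that just became MASTER, for example by mapping `VI_1` to 192.168.1.100 and `VI_2` to 192.168.1.101.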

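V. Troubleshooting and Monitoring

5.1 Troubleshooting

Check Keepalived state:

    # Check the Keepalived processes
    ps aux | grep keepalived
    # Check which VIPs are bound
    ip addr show | grep -E "inet.*vip"
    # Follow the Keepalived log
    journalctl -u keepalived -f
    # or
    tail -f /var/log/keepalived.log
    # Look for VRRP traffic in the connection-tracking table
    # (multicast advertisements go to 224.0.0.18)
    grep -E 'vrrp|224\.0\.0\.18' /proc/net/nf_conntrack

Check network connectivity:

    # Check the network between the two nodes
    ping -c 3 192.168.1.12
    # Check whether VRRP multicast gets through
    tcpdump -i eth0 vrrp -nn
    # Check firewall rules
    iptables -L -n | grep vrrp
    firewall-cmd --list-all

Check Nginx state:

    # Validate the configuration and check the service
    nginx -t
    systemctl status nginx
    # Check listening ports
    ss -tlnp | grep nginx
    # Check connection counts
    ss -s
    # Check stub_status
    curl http://127.0.0.1:10080/nginx_status

Trigger a failover manually for testing:

    # Method 1: stop Nginx
    systemctl stop nginx
    # Method 2: stop Keepalived
    systemctl stop keepalived
    # Method 3: simulate a network fault
    iptables -A INPUT -p vrrp -j DROP
    # Undo it
    iptables -D INPUT -p vrrp -j DROP

5.2 Performance Monitoring

Nginx metrics:

    # Use nginx-module-vts, or simply stub_status
    location /nginx_status {
        stub_status on;
        allow 127.0.0.1;
        deny all;
    }

Prometheus + Grafana monitoring:

    # Run nginx-prometheus-exporter.
    # stub_status listens on 127.0.0.1:10080 only, so the exporter shares the host network;
    # it serves metrics on port 9113.
    docker run -d --network host nginx/nginx-prometheus-exporter:latest --nginx.scrape-uri=http://127.0.0.1:10080/nginx_status

Key metrics:

    # Nginx metrics
    nginx_connections_active: current active connections
    nginx_connections_reading: connections being read
    nginx_connections_writing: connections being written
    nginx_connections_waiting: idle connections waiting for a request
    nginx_http_requests_total: total number of requests

    # Keepalived metrics (you have to collect these yourself)
    keepalived_vrrp_state: VRRP state (1=MASTER, 2=BACKUP, 3=FAULT)
    keepalived_vrrp_transitions: number of state transitions

    # Alert rules
    - Active connections exceed worker_connections * 0.8
    - VRRP state changes
    - Nginx process missing
    - Health check failing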
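The first alert rule is plain arithmetic and can be prototyped before it is written as a Prometheus rule. A minimal sketch, assuming the `worker_connections 65535` value from this article's nginx.conf; `conn_alert` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: flag active connections above 80% of worker_connections.
# Integer arithmetic only: threshold = worker_connections * 8 / 10.
conn_alert() {
    local active=$1 worker_connections=$2
    local threshold=$((worker_connections * 8 / 10))
    if [ "$active" -gt "$threshold" ]; then
        echo "ALERT active=$active threshold=$threshold"
    else
        echo "OK active=$active threshold=$threshold"
    fi
}

conn_alert 60000 65535
conn_alert 1200 65535
```

In a dual-master pair it is worth alerting well below this level too, because after a failover one node carries the connections of both.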

Example monitoring script:

    #!/bin/bash
    # /usr/local/bin/nginx-keepalived-monitor.sh

    # Collect Nginx status
    NGINX_STATUS=$(curl -s http://127.0.0.1:10080/nginx_status)
    ACTIVE=$(echo "$NGINX_STATUS" | grep 'Active' | awk '{print $3}')
    READING=$(echo "$NGINX_STATUS" | grep 'Reading' | awk '{print $2}')
    WRITING=$(echo "$NGINX_STATUS" | grep 'Writing' | awk '{print $4}')

    # Collect Keepalived status
    VIP1_STATUS="backup"
    VIP2_STATUS="backup"

    if ip addr show eth0 | grep -q "192.168.1.100"; then
        VIP1_STATUS="master"
    fi

    if ip addr show eth0 | grep -q "192.168.1.101"; then
        VIP2_STATUS="master"
    fi

    # Output in Prometheus format
    echo "# HELP nginx_connections_active Active connections"
    echo "# TYPE nginx_connections_active gauge"
    echo "nginx_connections_active $ACTIVE"

    echo "# HELP keepalived_vip1_is_master VIP1 master status"
    echo "# TYPE keepalived_vip1_is_master gauge"
    if [ "$VIP1_STATUS" == "master" ]; then
        echo "keepalived_vip1_is_master 1"
    else
        echo "keepalived_vip1_is_master 0"
    fi
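The field positions the script relies on are easier to see against a concrete stub_status response. The sketch below parses a sample response in a single awk pass; the numbers in `SAMPLE` are made up for illustration, and `parse_stub_status` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sample stub_status response (values invented for illustration).
SAMPLE='Active connections: 291
server accepts handled requests
 16630948 16630948 31070465
Reading: 6 Writing: 179 Waiting: 106'

# Parse the response from stdin and print Prometheus-style lines.
parse_stub_status() {
    awk '
        /^Active connections/ { active = $3 }
        /^Reading/            { reading = $2; writing = $4; waiting = $6 }
        END {
            printf "nginx_connections_active %d\n", active
            printf "nginx_connections_reading %d\n", reading
            printf "nginx_connections_writing %d\n", writing
            printf "nginx_connections_waiting %d\n", waiting
        }'
}

printf '%s\n' "$SAMPLE" | parse_stub_status
```

On a live node the same function can read the real endpoint: `curl -s http://127.0.0.1:10080/nginx_status | parse_stub_status`.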

5.3 Backup and Restore

Configuration backup script:

    #!/bin/bash
    # /usr/local/bin/backup-nginx-keepalived.sh

    BACKUP_DIR="/backup/nginx-keepalived"
    DATE=$(date +%Y%m%d_%H%M%S)
    HOSTNAME=$(hostname)

    mkdir -p "${BACKUP_DIR}"

    # Back up the Nginx configuration
    tar czf "${BACKUP_DIR}/${HOSTNAME}_nginx_${DATE}.tar.gz" \
        /etc/nginx/ \
        /etc/sysctl.d/99-nginx-keepalived.conf

    # Back up the Keepalived configuration
    tar czf "${BACKUP_DIR}/${HOSTNAME}_keepalived_${DATE}.tar.gz" \
        /etc/keepalived/

    # Back up the certificates
    tar czf "${BACKUP_DIR}/${HOSTNAME}_certs_${DATE}.tar.gz" \
        /etc/nginx/certs/

    # Keep 30 days of backups
    find "${BACKUP_DIR}" -type f -mtime +30 -delete

    echo "Backup completed: ${DATE}"

Quick restore procedure:

    # 1. Install the software
    dnf install -y nginx keepalived

    # 2. Restore the configuration
    tar xzf nginx_backup.tar.gz -C /
    tar xzf keepalived_backup.tar.gz -C /

    # 3. Validate the configuration
    nginx -t
    keepalived -t

    # 4. Apply the kernel parameters
    sysctl -p /etc/sysctl.d/99-nginx-keepalived.conf

    # 5. Start the services
    systemctl start nginx
    systemctl start keepalived

    # 6. Verify (the local health endpoint listens on port 10080)
    curl -I http://127.0.0.1:10080/health
    ip addr show eth0 | grep vip

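VI. Summary

6.1 Key Points

The core of the dual-master architecture is two VIPs: each machine holds one and backs up the other

The absolute value of the health-check weight must be greater than the Keepalived priority gap, so that a failed node drops below its peer

Health check scripts must test the business path, not just the process

Non-preemptive mode reduces the jitter caused by the VIP moving back and forth

In cloud environments multicast may not work; switch to unicast

6.2 Further Study

Three-node architecture: add an arbiter node to deal with split-brain

Cross-datacenter high availability: BGP + ECMP for traffic scheduling across sites

Integration with Consul/etcd: service discovery and dynamic configuration

Nginx Plus: the commercial edition offers stronger health checks and monitoring

6.3 References

Nginx documentation: https://nginx.org/en/docs/

Keepalived documentation: https://www.keepalived.org/manpage.html

Keepalived GitHub: https://github.com/acassen/keepalived

Linux Virtual Server: http://www.linuxvirtualserver.org/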

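Appendix

A. Command Quick Reference

| Command | Description |
|---|---|
| nginx -t | Check Nginx configuration syntax |
| nginx -s reload | Reload the configuration gracefully |
| systemctl status keepalived | Show Keepalived status |
| ip addr show eth0 | Show VIP bindings |
| tcpdump -i eth0 vrrp -nn | Capture VRRP heartbeat packets |
| journalctl -u keepalived -f | Follow the Keepalived log |
| arping -c 3 -A -I eth0 192.168.1.100 | Send gratuitous ARP |
| ss -tlnp \| grep nginx | Show Nginx listening ports |
| curl http://127.0.0.1:10080/nginx_status | Show Nginx status |

B. Configuration Parameters

Key Keepalived parameters:

| Parameter | Description | Recommended value |
|---|---|---|
| state | Initial state | MASTER/BACKUP |
| priority | Priority | 1-255, MASTER higher than BACKUP |
| advert_int | Heartbeat interval | 1 second |
| virtual_router_id | Virtual router ID | 1-255, must match within a group |
| nopreempt | Disable preemption | Enable when stability matters most |
| weight | Health-check weight | Negative, absolute value greater than the priority gap |
| fall | Failures before marking down | 2-3 |
| rise | Successes before marking up | 2 |
| interval | Check interval | 2 seconds |

Nginx load-balancing parameters:

| Parameter | Description | Recommended value |
|---|---|---|
| weight | Weight | Default 1 |
| max_fails | Maximum number of failures | 2-3 |
| fail_timeout | Time a failed server is paused | 10-30s |
| keepalive | Idle keepalive connections | 50-100 |
| keepalive_requests | Maximum requests per connection | 1000 |
| keepalive_timeout | Keepalive timeout | 60s |

C. Glossary

| Term | Explanation |
|---|---|
| VIP | Virtual IP address, used for high-availability switchover |
| VRRP | Virtual Router Redundancy Protocol |
| MASTER | The primary node, which holds the VIP |
| BACKUP | The standby node, waiting to take over the VIP |
| Priority | Decides which node becomes MASTER |
| Preempt | Preemptive mode: a higher-priority node takes the VIP back after it recovers |
| Split-Brain | Both nodes believe they are MASTER |
| GARP | Gratuitous ARP, used to tell the network that the VIP has moved |
| Health Check | Checks whether the service is working |
| Failover | Switching to the standby node when the primary fails |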


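Original title: Nginx+Keepalived雙主架構:消除單點故障的最佳實踐 (Nginx + Keepalived Dual-Master Architecture: Best Practices for Eliminating Single Points of Failure)

Source: WeChat official account 馬哥Linux運維 (WeChat ID: magedu-Linux). Please credit the source when reposting.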
